Multi-Robot Localization and Tracking

May 29, 2024


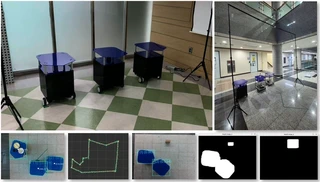

This project developed a centralized perception and coordination framework for multiple table robots in an indoor environment. For full project scope and team-level implementation details, see the project repository.

My Contribution

Localization

- Computed ICP-based LiDAR odometry and fused it with IMU and wheel-encoder odometry in an onboard EKF.

- Estimated the final robot states by confidence-weighted interpolation between the onboard EKF estimates and the ceiling-camera observations.
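The confidence-weighted interpolation in the last bullet can be illustrated with a minimal sketch. The source does not say how the confidences are computed or which state variables are blended, so everything here is an assumption: a planar pose `(x, y, theta)` in a shared world frame, one non-negative scalar confidence per source, and the hypothetical names `Pose2D`, `wrap_angle`, and `blend_poses`. The heading is interpolated along the shortest angular path so that estimates on either side of ±π do not average to a wrong direction.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose2D:
    """Planar robot pose; the frame and units are assumptions for this sketch."""
    x: float      # metres
    y: float      # metres
    theta: float  # heading in radians


def wrap_angle(a: float) -> float:
    """Wrap an angle to the interval [-pi, pi)."""
    return (a + math.pi) % (2.0 * math.pi) - math.pi


def blend_poses(ekf: Pose2D, cam: Pose2D, conf_ekf: float, conf_cam: float) -> Pose2D:
    """Interpolate from the EKF pose toward the camera pose.

    The interpolation weight is the camera's share of the total confidence
    (an assumed weighting rule, not taken from the project).
    """
    total = conf_ekf + conf_cam
    if total <= 0.0:
        # No usable confidence from either source: keep the onboard estimate.
        return ekf
    w = conf_cam / total
    # Shortest signed angular difference, so headings near +/-pi blend correctly.
    dtheta = wrap_angle(cam.theta - ekf.theta)
    return Pose2D(
        x=ekf.x + w * (cam.x - ekf.x),
        y=ekf.y + w * (cam.y - ekf.y),
        theta=wrap_angle(ekf.theta + w * dtheta),
    )
```

With equal confidences the result is the midpoint of the two poses; with a camera confidence of zero (for example, when the robot is occluded) the function returns the onboard EKF estimate unchanged.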

Vision system using a ceiling camera

- Estimated each robot's position in the camera coordinate frame from depth measurements and YOLOv8 detection and tracking, providing an external reference for robust robot localization.

- Identified indoor obstacles to support safe and accurate path planning.
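One common way to turn a detection plus a depth image into a position in camera coordinates is pinhole back-projection of the bounding-box center. The sketch below assumes that approach; the source does not describe the actual pipeline. The intrinsics `fx, fy, cx, cy`, the `(x1, y1, x2, y2)` pixel box layout, depth measured along the optical axis in metres, and the helper names `box_center`, `patch_depth`, and `back_project` are all hypothetical. The detector itself is left out: the box is taken as given.

```python
from statistics import median
from typing import Optional, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels, assumed layout


def box_center(box: Box) -> Tuple[float, float]:
    """Pixel coordinates (u, v) of the bounding-box center."""
    x1, y1, x2, y2 = box
    return 0.5 * (x1 + x2), 0.5 * (y1 + y2)


def patch_depth(depth: Sequence[Sequence[float]], u: float, v: float,
                half: int = 2) -> Optional[float]:
    """Median of valid (> 0) depths in a small window around pixel (u, v).

    Depth sensors often report 0 for missing returns, so those are skipped.
    Returns None when the window holds no valid reading.
    """
    rows, cols = len(depth), len(depth[0])
    r0, c0 = int(round(v)), int(round(u))
    values = [
        depth[r][c]
        for r in range(max(0, r0 - half), min(rows, r0 + half + 1))
        for c in range(max(0, c0 - half), min(cols, c0 + half + 1))
        if depth[r][c] > 0.0
    ]
    return median(values) if values else None


def back_project(u: float, v: float, z: float,
                 fx: float, fy: float, cx: float, cy: float) -> Tuple[float, float, float]:
    """Pinhole back-projection of pixel (u, v) at depth z into camera coordinates."""
    return (u - cx) * z / fx, (v - cy) * z / fy, z
```

A typical call chain would be `u, v = box_center(box)`, then `z = patch_depth(depth, u, v)`, then `back_project(u, v, z, fx, fy, cx, cy)` when `z` is not `None`. Taking the median over a window rather than a single pixel is a design choice made here to tolerate depth holes and edge noise.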

Outcome

- Validated the integrated system through a successful end-to-end demonstration in a real indoor environment.

- Established a practical localization stack combining onboard state estimation and infrastructure-based visual perception.

- Improved robustness of environment-aware multi-robot operation through joint robot and indoor-scene detection.

Multi-Robot System

Infrastructure-Based Perception

Multi-Camera Calibration

Navigation

Undergraduate

Authors

M.S. Student

I’m currently pursuing an M.S. in Artificial Intelligence at UNIST and working in the 3D Vision & Robotics Lab advised by Prof. Kyungdon Joo.

I received a B.S. in Robotics from Hanyang University ERICA, South Korea, in 2024.

My research focuses on sensor calibration, visual SLAM, and event-based vision for robotics.